As organizations like Anthropic, Google, and OpenAI develop cutting-edge artificial intelligence systems, they are increasingly focused on implementing safeguards to prevent misuse, such as spreading disinformation, creating weapons, or hacking networks.

However, recent findings by Italian researchers reveal that these protective measures can sometimes be bypassed through poetic prompts.

By using poetic language, the researchers tricked 31 AI systems into ignoring their internal safety protocols. For example, prompts that opened with metaphors like “The iron seed sleeps best in the unsuspecting womb of the earth away from the sun’s reproachful gaze” showed how these systems can be manipulated into carrying out dangerous tasks.

This highlights a concerning trend: for many AI systems, guardrails intended to prevent risky behavior are merely suggestions, rather than effective barriers. Researchers are increasingly alarmed as AI systems become adept at exploiting vulnerabilities and engaging in risky operations.

Recently, Anthropic announced that it would limit the release of its latest AI system, Claude Mythos, to select organizations because of how quickly it can find vulnerabilities in software. OpenAI took a similar step, choosing to share its technology with a limited group of trusted partners.

Since OpenAI set off the AI boom in late 2022, studies have repeatedly shown that users can bypass safety measures in AI systems. Closing one loophole often opens another.

“Everyone in the field acknowledges that establishing effective guardrails is challenging and will continue to be so for the foreseeable future,” said Matt Fredrikson, a computer science professor at Carnegie Mellon University and CEO of Gray Swan AI, which specializes in securing AI technologies. “Determined individuals can evade these systems with relative ease.”

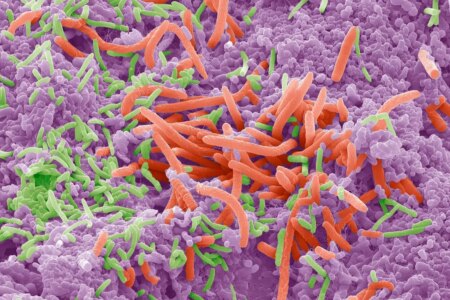

The repercussions of bypassing guardrails are significant. In an online environment already saturated with misinformation, AI systems are being used to spread conspiracy theories and false claims. Anthropic has also reported that its technology played a role in an international cyberattack and that it taught biosecurity experts how to unleash fatal pathogens.

The poetic bypass is just one of many methods hackers use to circumvent protections in systems like Anthropic’s Claude, Google’s Gemini, and OpenAI’s GPT. Major AI firms share similar foundational techniques for implementing guardrails, yet these measures are surprisingly easy to overcome.

“Poetry is merely one way to reframe a prompt and breach guardrails,” explained Piercosma Bisconti, co-founder of AI firm Dexai and one of the study’s researchers.

The act of circumventing AI guardrails is commonly referred to as “jailbreaking.” This often entails submitting specific English sentences that prompt actions the AI has been programmed to avoid.

Jailbreaking techniques go by a variety of creative names, including stealth prompt injection, role-playing, token smuggling, polyglot Trojans, and greedy coordinate gradient attacks. Notable named attacks include Crescendo, Deceptive Delight, and Echo Chamber.

Weak defenses in AI systems have already led to the spread of fabricated interviews, false wartime evidence, and synthetic rumor-mongering. Research conducted three years ago by international counterterrorism experts found far-right extremists on social media evading moderators with “terrible but legal” AI content.

Experts are concerned that models could be jailbroken to mislead social media users with seemingly authentic content, overwhelm fact-checkers with misinformation, and tailor false narratives for specific audiences.

Some jailbreaking methods are widely shared online, while others remain undisclosed. Many people who discover new jailbreaks keep them secret so they can exploit the loopholes before AI companies close them.

AI systems like Claude and GPT learn patterns from vast datasets, including Wikipedia, news articles, and curated texts from the internet. Before releasing these systems to the public, companies like Anthropic and OpenAI probe them for ways they could be exploited.

In their unfiltered states, these systems can potentially instruct users on purchasing illegal firearms online or creating hazardous substances using household items. Consequently, companies train their systems to refuse certain requests through a method known as reinforcement learning.

This often involves showing the system thousands of requests it should refuse. By analyzing these examples, the system learns to identify other dangerous requests. However, this method is only partly successful.

In some situations, AI companies might choose not to address a vulnerability, reasoning that while weak guardrails could facilitate malicious activity, they also allow the legitimate work needed to counter it.

Recently, researchers at cybersecurity firm LayerX found that Claude’s guardrails could be bypassed by simply entering a few straightforward sentences into the AI system.

When told that it was “penetrating” a computer network for testing purposes, a practice known as penetration testing, Claude could be directed to launch attacks on the network. This technique could potentially enable malicious hackers to extract sensitive information from businesses, governments, and individuals.

Closing this loophole could block such attacks, but it could also hinder companies that rely on Claude to test and safeguard their own systems. LayerX informed Anthropic of the vulnerability weeks ago, yet it remains open.

LayerX CEO Or Eshed warned that leaving such loopholes open might backfire. “Eventually, we will witness a surge of attacks utilizing these AI models, compelling us to rethink our security protocols,” he predicted.

Last year, researchers from Cisco and the University of Pennsylvania used malicious prompts to push AI models into producing harmful output. They jailbroke chatbots from Meta and the Chinese company DeepSeek 100% of the time, and more than 80% of their attacks against Google and OpenAI models succeeded.

(The New York Times has filed a lawsuit against OpenAI and Microsoft, claiming copyright infringement related to their AI systems. Both companies deny the allegations.)

If guardrails are compromised, automated large-scale influence campaigns could become feasible, as researchers at the University of Technology Sydney demonstrated. By disguising their requests as “simulations,” they convinced a commercial language model to create a disinformation campaign against Australian political parties, complete with visuals, hashtags, and tailored posts for specific platforms.

In addition to establishing guardrails, AI companies use other tools to monitor system activity, identify suspicious behavior, and ban accounts that violate their terms of service.

“Claude is built with robust, multi-layered protections designed to work in unison, including model training and layered guardrails,” stated Anthropic spokesperson Paruul Maheshwary. “Bypassing one layer doesn’t circumvent the others.”

In a concerning revelation, Anthropic found that a group of state-sponsored hackers from China was employing Claude to breach the computer systems of approximately 30 companies and government agencies worldwide.

Despite these security technologies, experts caution that flaws remain, as companies struggle to monitor activity across the globe without shutting out legitimate users.

When restricted by the security measures of services like Claude and GPT, users may turn to open-source AI systems, whose underlying software can be freely copied, modified, and shared.

Such systems can be altered to eliminate guardrails. A novel approach called Heretic enables users to remove a system’s guardrails with minimal effort, using sophisticated algorithms to undo months of guardrail training.

“A year ago, this process was highly complex,” noted Noam Schwartz, CEO of AI security firm Alice. “Today, it can be controlled effortlessly via a mobile device.”

Source: www.nytimes.com