Project Maven: An Overview of AI in Warfare

Credit: Devon Bistarkey, Defense Innovation Unit

Project Maven

Katrina Manson, WW Norton

The Israeli military is leveraging artificial intelligence (AI) for target identification in the Gaza Strip, the U.S. is strategizing similarly against Iran, and Ukraine is innovating with advanced drones. AI warfare is not a distant reality; it is unfolding today.

Scholars will spend decades examining the global policies, potential advantages, challenges, and ethical dilemmas of military AI. Katrina Manson’s Project Maven takes a different approach, drawing on more than 200 interviews to narrate the U.S. military’s path toward AI warfare through just one of the 800 AI initiatives housed within the Pentagon.

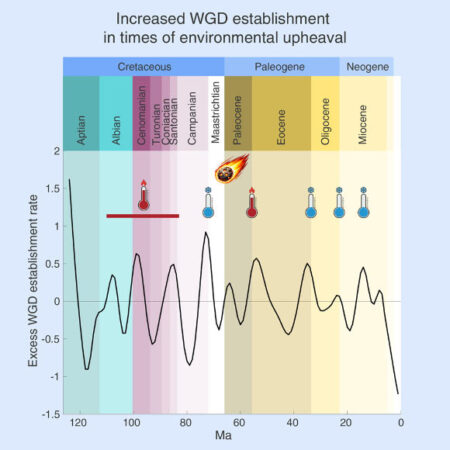

Launched in 2017, Project Maven aims to develop systems that process and analyze the vast quantities of data collected by drones, a volume that human analysts could not keep pace with. Manson notes that the project faced early hurdles. Within eight months, it was deployed in Somalia, where the algorithm misidentified common objects, for example detecting school buses in clouds.

The narrative then goes back in time, with a project leader reflecting on his experience as an intelligence officer in Afghanistan, where he struggled to plan missions armed only with outdated technology. His account raises basic questions: how do we define the enemy, ensure safety, and measure success in warfare?

In the chaos of war, human fallibility prevails: efficiency dwindles, fatigue mounts, and errors arise. Proponents of AI, including Project Maven’s architects, believe AI could mitigate these factors. Their vision extends even further, to eliminating human deliberation from targeting decisions and allowing AI to execute missions faster than any human operator could.

“Machines can’t be worse than humans,” remarks an insider. The Maven team refined its tools, attempting to persuade frontline operators to adopt these technologies. While improvements appeared, mistakes persisted.

Since then, the U.S. and its NATO allies have used Maven in various conflicts. About 32 companies are now working on the initiative, and 25,000 U.S. military personnel log into the system regularly. It has also been used in border security and in operations against drug trafficking throughout the Caribbean. This prompts a critical question: can a state wield such tools without infringing on citizens’ rights?

Perhaps most alarming is Manson’s assertion that efforts to automate warfare are advancing, with drones such as “Goalkeeper” and “Whiplash” capable of autonomously identifying and neutralizing threats. How would AI decide in a high-stakes scenario like the one Soviet Lieutenant Colonel Stanislav Petrov faced in 1983, when his choice averted nuclear war?

The book’s insights concern AI technology itself less than the interplay between Pentagon bureaucracy and Silicon Valley’s readiness to take on ethically controversial projects for profit. Manson’s access makes her revelations significant, but military secrecy means the specific technologies developed and how they are applied may remain undisclosed for years.

Modern warfare has become dehumanized: operators watch deadly situations unfold on screens thousands of miles away and decide whether to strike. This detachment risks making the act of war less burdensome and its ramifications easier to ignore.

“Goalkeeper flying drones and Whiplash naval drones can autonomously find and neutralize targets.”

It is imperative that the power bestowed by AI in warfare is approached with the seriousness it deserves. Yet, Manson shares a chilling anecdote about an interviewee expressing a desire to join Project Maven to “reduce the non-American population.”

Recommended Reads on AI and Warfare

The Making of the Atomic Bomb – Written by Richard Rhodes

This book draws critical parallels to the future of military AI, suggesting potential risks including heightened global tensions and the likelihood of warfare.

Should We Ban Killer Robots? – Written by Deane Baker

The ethics professor explores the complex issues surrounding the deployment of AI in military operations, touching on trust, control, and accountability in an era where machines might assume soldiers’ roles.


Source: www.newscientist.com