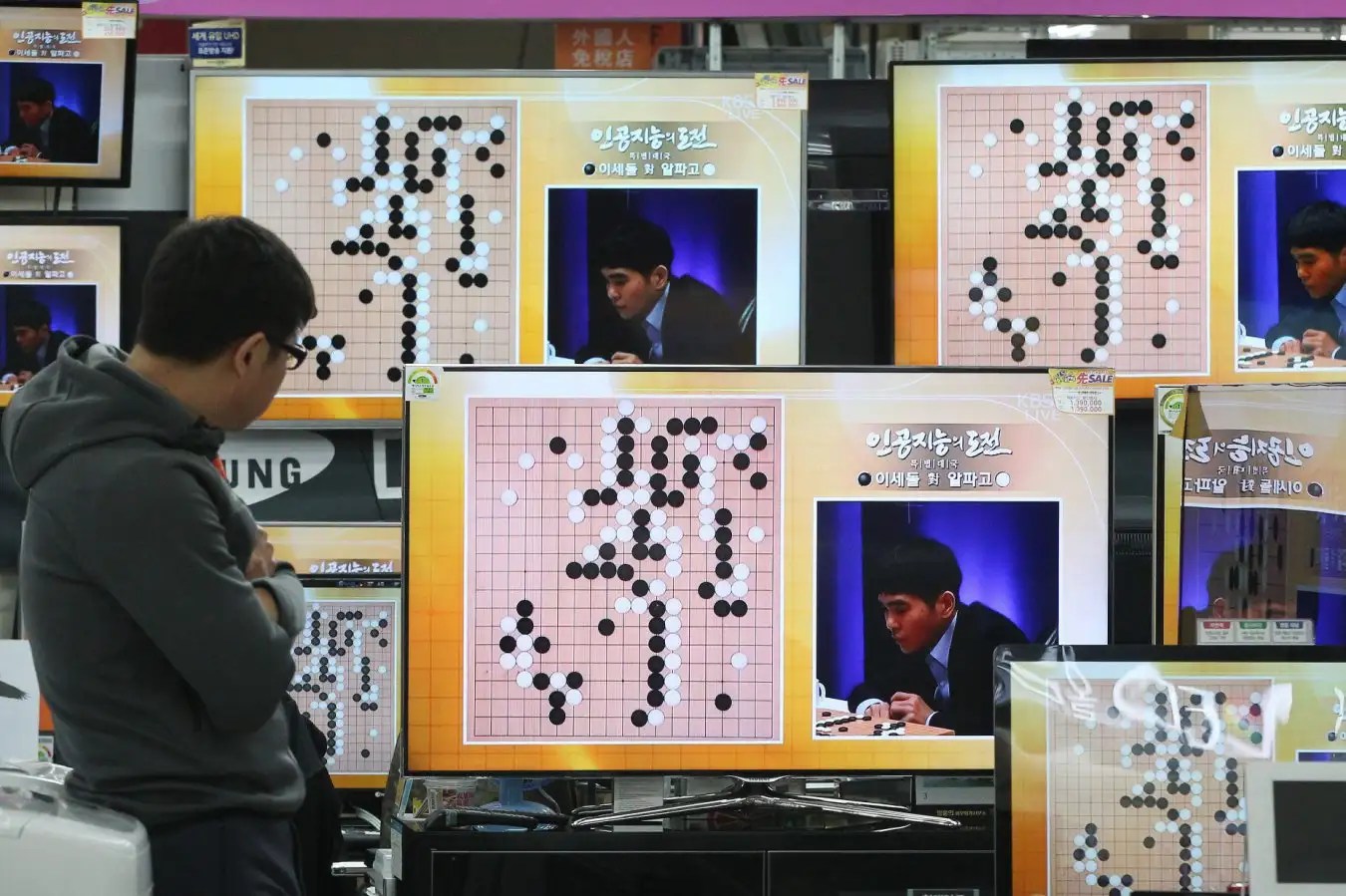

Lee Sedol faced AlphaGo during their 2016 match

AP Photo/Ahn Young Jun/Alamy

When AlphaGo showcased its capabilities, the world took notice. Lee Sedol, one of the world’s top Go players, was visibly unsettled by the machine’s play. The audience in downtown Seoul, South Korea, was captivated, realizing that this AI was something new.

Not only did AlphaGo defeat Lee, but it did so with a style of play that resembled human intuition. “AlphaGo actually has intuition,” said Sergey Brin, co-founder of Google, right after AlphaGo took a 3-0 lead in the five-game match. “It creates beautiful movements, even more so than many humans expect,” he told New Scientist.

The match ended with AlphaGo winning 4-1, leaving Lee in disbelief.

Ten years have passed since that match marked a turning point for AI. Now that large language models like ChatGPT dominate the conversation, it is worth reflecting on how AlphaGo foreshadowed today’s AI. What remains of AlphaGo’s legacy, and has the technology fulfilled its promise?

“While large language models differ fundamentally from AlphaGo, there are crucial technological connections that have persistently evolved,” notes Chris Maddison, a professor at the University of Toronto and a member of the original AlphaGo development team.

The core technology behind AlphaGo is the neural network, a mathematical structure loosely inspired by the brain and used for machine learning. Historically, programming a machine to play a game meant humans had to write out the rules it followed. Neural networks allow machines to learn from examples instead.

However, mastering Go with neural networks presented a significant challenge. The ancient game allows for 10^171 possible positions on a 19 x 19 board, dwarfing even the estimated 10^80 atoms in the observable universe.
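To get a feel for the scale, the short calculation below computes a simple upper bound on the number of board configurations. It assumes only that each of the 361 points is empty, black, or white; many of these configurations are not legal positions, so the true count is lower than this bound. The function name `order_of_magnitude` is illustrative.

```python
# Upper bound on Go board configurations: each of the 19 x 19 = 361 points
# is empty, black, or white. Not every configuration is a legal position,
# so the true count is smaller than this bound.
POINTS = 19 * 19
upper_bound = 3 ** POINTS
atoms_estimate = 10 ** 80  # the estimate for atoms quoted in the article


def order_of_magnitude(n: int) -> int:
    """Return the exponent k such that 10**k <= n < 10**(k + 1)."""
    return len(str(n)) - 1


bound_exponent = order_of_magnitude(upper_bound)
ratio_exponent = order_of_magnitude(upper_bound // atoms_estimate)

print(f"3^361 is on the order of 10^{bound_exponent}")
print(f"That is about 10^{ratio_exponent} configurations per atom")
```

Python integers have arbitrary precision, so the bound is computed exactly rather than as a floating-point approximation.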

The breakthrough came when Maddison and his colleagues set out to emulate human intuition by training a neural network to predict the best move, using millions of moves from past human games. Humans develop intuition from far less data, but access to such a vast record gave the AI a competitive edge.
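The idea of learning to predict an expert’s move from recorded examples can be sketched in a few lines. This is a toy illustration, not AlphaGo’s network: it assumes a single linear layer with a softmax output, three candidate moves, and made-up three-number “position features”. All names and data here are hypothetical.

```python
import math


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def predict(weights, features):
    """Return a probability for each candidate move."""
    scores = [sum(w * x for w, x in zip(row, features)) for row in weights]
    return softmax(scores)


def train(examples, n_moves, n_features, epochs=200, lr=0.5):
    """Fit the weights by gradient descent on cross-entropy loss."""
    weights = [[0.0] * n_features for _ in range(n_moves)]
    for _ in range(epochs):
        for features, expert_move in examples:
            probs = predict(weights, features)
            for move in range(n_moves):
                # Gradient of the loss with respect to this move's score.
                error = probs[move] - (1.0 if move == expert_move else 0.0)
                for j in range(n_features):
                    weights[move][j] -= lr * error * features[j]
    return weights


# Hypothetical toy data: (position features, move the expert played).
examples = [
    ([1.0, 0.0, 0.0], 0),
    ([0.0, 1.0, 0.0], 1),
    ([0.0, 0.0, 1.0], 2),
    ([0.9, 0.1, 0.0], 0),
    ([0.1, 0.8, 0.1], 1),
]

weights = train(examples, n_moves=3, n_features=3)
probs = predict(weights, [0.2, 0.7, 0.1])  # a position not seen in training
best_move = max(range(3), key=lambda m: probs[m])
print(best_move, [round(p, 3) for p in probs])
```

The model never sees the rules of any game. It only adjusts its weights until the moves it rates as likely match the moves the expert actually played, which is the sense in which such a network imitates intuition.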

Furthermore, AlphaGo wasn’t limited to learning from human games; it could play millions of games against itself to refine its play. “Through countless matches, we can uncover new strategies that surpass human performance,” explained Pushmeet Kohli, a leader at Google DeepMind.
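Self-play can be illustrated on a game small enough to fit in a table. The sketch below is not AlphaGo’s method, which combined deep networks with tree search. It assumes a simple stone-taking game (remove one to three stones, whoever takes the last stone wins) and a tabular value update. One table plays both sides and learns only from whether each move leads to a win.

```python
import random

MAX_TAKE = 3     # a player may remove 1 to 3 stones per turn
START_MAX = 10   # games start from a pile of 1 to 10 stones


def legal_moves(pile):
    return range(1, min(MAX_TAKE, pile) + 1)


def best_value(q, pile):
    """Value of the position for the player about to move."""
    return max(q[(pile, m)] for m in legal_moves(pile))


def self_play(episodes=5000, alpha=0.5, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = {(p, m): 0.0 for p in range(1, START_MAX + 1) for m in legal_moves(p)}
    for _ in range(episodes):
        pile = rng.randint(1, START_MAX)
        while pile > 0:
            moves = list(legal_moves(pile))
            if rng.random() < epsilon:
                move = rng.choice(moves)                       # explore
            else:
                move = max(moves, key=lambda m: q[(pile, m)])  # exploit
            remaining = pile - move
            # Taking the last stone wins (+1). Otherwise the opponent moves
            # next, so our value is the negative of their best value.
            target = 1.0 if remaining == 0 else -best_value(q, remaining)
            q[(pile, move)] += alpha * (target - q[(pile, move)])
            pile = remaining
    return q


def greedy_move(q, pile):
    return max(legal_moves(pile), key=lambda m: q[(pile, m)])


q = self_play()
policy = {pile: greedy_move(q, pile) for pile in (5, 6, 7)}
print(policy)
```

No human games are involved. The known winning strategy for this game is to leave the opponent a multiple of four stones, and the learned table can be checked against it.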

The final version of AlphaGo that triumphed over Lee was more intricate than Maddison’s original model, but the conclusion was clear: neural networks excel at pattern recognition and can possess an intuition that surpasses human understanding, according to Noam Brown at OpenAI.

The Next Iterations of AI

What followed AlphaGo? Google DeepMind and other AI researchers began applying its foundational lessons to real-world problems, including mathematics and biology. A prominent example is AlphaFold, which predicts the three-dimensional structures of proteins from their amino acid sequences, earning its creators a Nobel Prize in Chemistry.

Recently, another neural-network-based AI, AlphaProof, astonished mathematicians with its stellar performance on problems from the International Mathematical Olympiad, a prestigious competition for school students. “Superhuman intelligence is not just confined to games; it extends into crucial scientific endeavors,” Kohli emphasized.

The methods behind AlphaGo and large language models like ChatGPT share similarities. The first phase, known as pre-training, feeds vast amounts of data into the neural network: complete Go games for AlphaGo, or much of the text on the internet for language models. The second phase, post-training, refines the network using reinforcement learning, which rewards the model when it succeeds at a task so that it learns what success looks like.

For AlphaGo, this meant playing millions of games against itself to discover winning strategies. AlphaFold relied on learning from proteins whose correctly folded structures were known. ChatGPT uses a process called reinforcement learning from human feedback to teach the model which answers people prefer, and further training guides it through specific tasks like mathematics or coding.
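The post-training step can be sketched as nudging a model toward answers that earn higher reward. This is a deliberately simplified illustration, not how ChatGPT is trained. It assumes three fixed candidate answers, hypothetical reward scores, and an exact policy-gradient update, whereas production systems typically sample answers and use a learned reward model.

```python
import math


def softmax(logits):
    """Turn raw preferences into probabilities that sum to 1."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def fine_tune(logits, rewards, steps=200, lr=1.0):
    """Raise the probability of answers that score above the average reward."""
    logits = list(logits)
    for _ in range(steps):
        probs = softmax(logits)
        baseline = sum(p * r for p, r in zip(probs, rewards))  # expected reward
        for i, (p, r) in enumerate(zip(probs, rewards)):
            # Exact gradient of the expected reward with respect to logit i.
            logits[i] += lr * p * (r - baseline)
    return logits


# Hypothetical reward scores for three candidate answers, for example
# human preference ratings or whether a generated program passed its tests.
answers = ["answer A", "answer B", "answer C"]
rewards = [0.1, 0.9, 0.4]
pretrained_logits = [2.0, 0.0, 1.0]  # the "pre-trained" model prefers A

before = softmax(pretrained_logits)
after = softmax(fine_tune(pretrained_logits, rewards))

print("before:", answers[before.index(max(before))])
print("after: ", answers[after.index(max(after))])
```

The reward signal is the only thing that changes the model’s preference here, which is why a clear, reliable measure of success matters so much for this phase.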

However, this process isn’t without challenges. Neural networks often function as black boxes; their internal mechanisms can be too complex to comprehend fully.

When AlphaGo played its now-famous move 37 in the second game, spectators initially believed the AI had made an error, only to see later that it was a brilliant strategic move. Yet engineers at Google DeepMind could not explain why AlphaGo made that choice, leaving room for doubt about its reasoning.

“These models produce answers, yet we cannot discern whether they are profound insights or mere hallucinations,” Kohli commented. “We are actively exploring methods to address such issues.”

A large part of AlphaGo’s success stemmed from the quality of its training data and from having a clear measure of success. This suggests that AI thrives in fields where both conditions are met. Maddison says that domains like mathematics and programming lend themselves to clearly defined success criteria. “These similarities highlight essential factors that drive progress in AI development,” he concluded.

Source: www.newscientist.com